Supervised by: Sanjan Das. Sanjan is a final year Engineering student at the University of Cambridge. Over his degree, he has specialised in Bioengineering and Information Engineering. He is set to begin an MS in Artificial Intelligence and Innovation at Carnegie Mellon University, U.S.A later this year.

Abstract

There is no doubt that AI technology will play a key role in the future, but it is already changing lives today. One field that AI technology has already heavily impacted is the field of education. AI technology has helped students study more effectively, helped struggling students catch up to their peers through personalized learning, and most of all, made high-quality education far more accessible to students with limited financial resources. AI Chatbots have quickly become one of the more popular AI tools used in the field of education; students are using AI-powered software such as Quizlet and Kidaptive to learn and study more interactively and effectively.

This paper dives into machine learning, deep learning, supervised learning, natural language processing, data collection and preparation, training, long short term memory, evaluation, and deployment to lay the groundwork for discussing our detailed proposal: SCIENTIFIC. SCIENTIFIC is an AI-powered learning platform that seeks to deliver the Science curriculum in an engaging and fully interactive manner. SCIENTIFIC provides a video-based curriculum that incorporates virtual reality and an AI, deep learning, NLP chatbot to keep users engaged and learning effectively.

1. Introduction

1.1 Why Chatbots?

The simplest way to define a chatbot is as a machine or a tool that converses with a human in a natural human language.

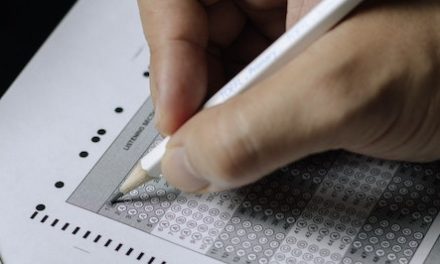

The obvious question here is why would people use chatbots when they could chat with another person? To put it simply, chatbots are often preferred because of their intelligent technology, affordability, and ability to maximize efficiency. Essentially, chatbots exist to ease humans’ monotonous workloads, thereby allowing humans to shift their focus to more important aspects of a job (such as the ‘bigger picture’ decisions). Currently, chatbots are being used as personal assistants, as the first line of customer service (as seen in Figure 1), and even in the field of education as teaching assistants! To understand how chatbots are able to take on such roles, one must first gain an understanding of the technical aspects of chatbots, artificial intelligence (AI), machine learning (ML), deep learning (DL), supervised learning, natural language processing (NLP), data collection and preparation, long short term memory (LSTM), and training.

Figure 1: A Customer-service Chatbot [6]

1.2 Technical Aspects

The way a chatbot simulates interactive human conversation is by using key pre-calculated user phrases and auditory or text-based signals.

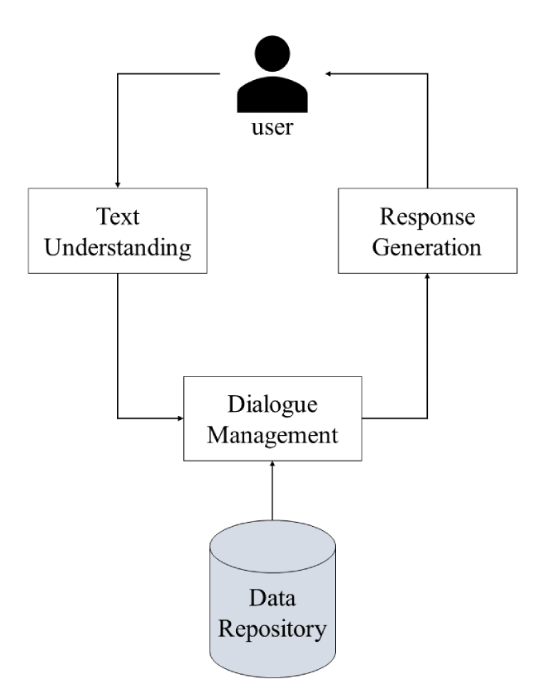

Chatbots consist of 4 main modules: a text understanding module, a dialogue manager, a text generation module, and a database layer that holds the various types of information needed for chatbot training and function.

Figure 2: Chatbot Modules [19]

a) Text Understanding

The text understanding module is the one with which the user directly interacts. The function of the text understanding module is to extract meaning from the user input before a specific answer is generated. Usually, a pattern matching method is used where keyword or string matching occurs, meaning specific keywords or strings the user has input are matched to scripts stored in the database. Supervised machine-learning algorithms that include decision trees and random forests are also used.

b) Dialogue Management

The input to the dialogue management module is the processed text input provided by the text understanding module. The dialogue management module controls the different aspects of the conversation and links each user input to an appropriate output. There are two types of dialogue management: static dialogue management and dynamic dialogue management. In dynamic dialogue management, the context of the conversation changes based on specific user input characteristics. The switching of context can be done by training ML algorithms to identify context from user input. In static dialogue management, the context of the conversation does not change throughout, and the responses produced by the AI are all based on predefined topics from a particular database.

c) Text Generation

The text generation module provides output to the user. Text generation either uses fixed output or generated output. Fixed output methods search the database for the most appropriate output for a user’s input and present it to the user. The generated output method, on the other hand, relies on ML to generate original natural language output that is produced by the ML algorithm. This output is far more advanced and user-friendly as it provides users an alternative to the usual daunting textbook definitions.

d) Database

All data are stored in a database as shown in Figure 2.

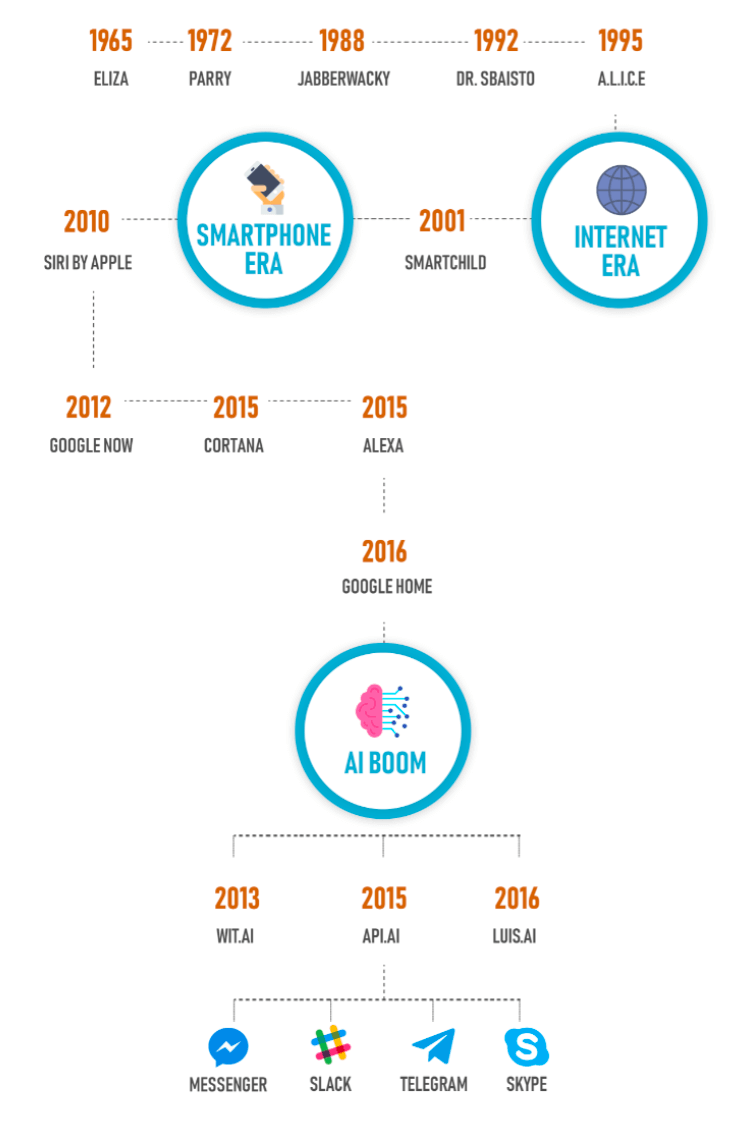

2. The Evolution of Chatbots

2.1 Early Stages

Joseph Weizenbaum had developed the first chatbot in 1966, which is known today as “ELIZA”. The main purpose of this technology was to make human interaction as realistic as possible using ML. However, because pre-written scripts were used, these chatbots were extremely generic and were limited to a certain scope or topic. Because of such limitations, society has now shifted towards data-driven AI models. Though data-driven models require large amounts of data to be trained with, they are more adaptable and can easily venture past the boundaries of a sole topic. Collecting the necessary data to train data-driven models is surprisingly easy as it is widely available in the form of public conversation data from social media, forums, Q&As, microblogs, chat sites, and many more internet-based human-to-human interactions.

Other chatbots like “Perry” and “A.L.I.C.E” focused more on human paranoia and emotions. However, many of these early chat boxes had trouble passing the “Turing Test”, the ability to replicate a human’s intelligence. The level of technology was one of the major reasons that creators could only achieve their objectives at a bare minimum.

2.2 Growth of Chatbots

Over the past decade, the chatbot usage has grown exponentially. This is primarily because new popular messaging apps have become so reliant on these machines to take their companies to the next level. These machines are more incorporated into people’s lives today than ever before. WeChat, an app revolutionized by chatbots, has created shortcuts for people to book flights, find restaurants, and even allow transactions.

Figure 3: Chatbot Evolution Timeline [17]

Improvements in Chatbots:

- Higher Application to Everyday Life

- Advanced Platforms for Better Performance

- More Realistic Human Interaction (Customer Services)

- Higher Versatility/Easier to Access

- Effective Tool for Business Expansion

3. AI learning and NLP

Because most machines today are data-driven, Deep Learning and NLP play a key role in how such machines learn.

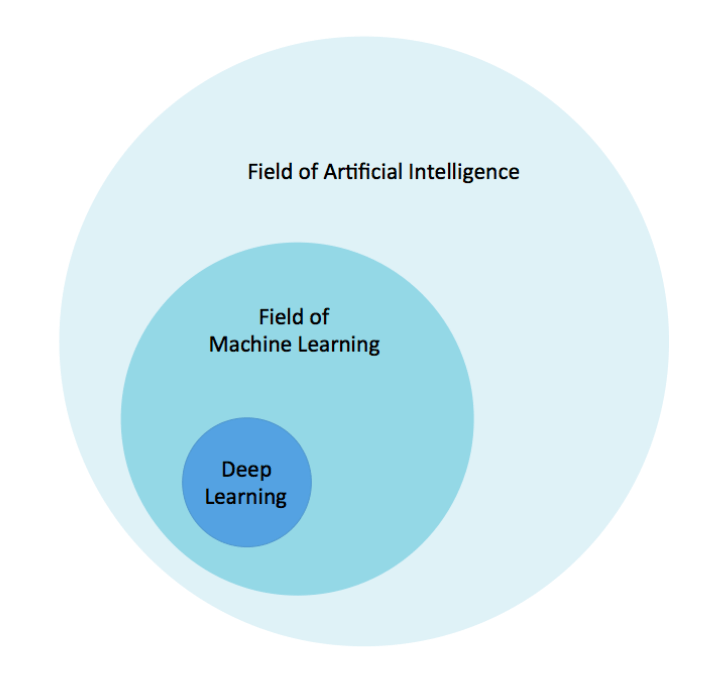

3.1 AI, ML, and DL

Figure 4 represents the relationship between artificial intelligence (AI), machine learning (ML) and deep learning (DL).

Figure 4: AI, ML, and DL [12]

AI is a technique that enables machines to simulate human intelligence and mimic human behavior, whereas ML is a technique to achieve AI through algorithms trained with data. DL is a subset of ML that is inspired by the human brain and its structure.

DL machines are more independent than ML machines in that once they are trained with data, it would be fairly accurate to say that they learn on their own through their own method of computing. That being said, DL machines require a higher volume of data for training than ML machines.

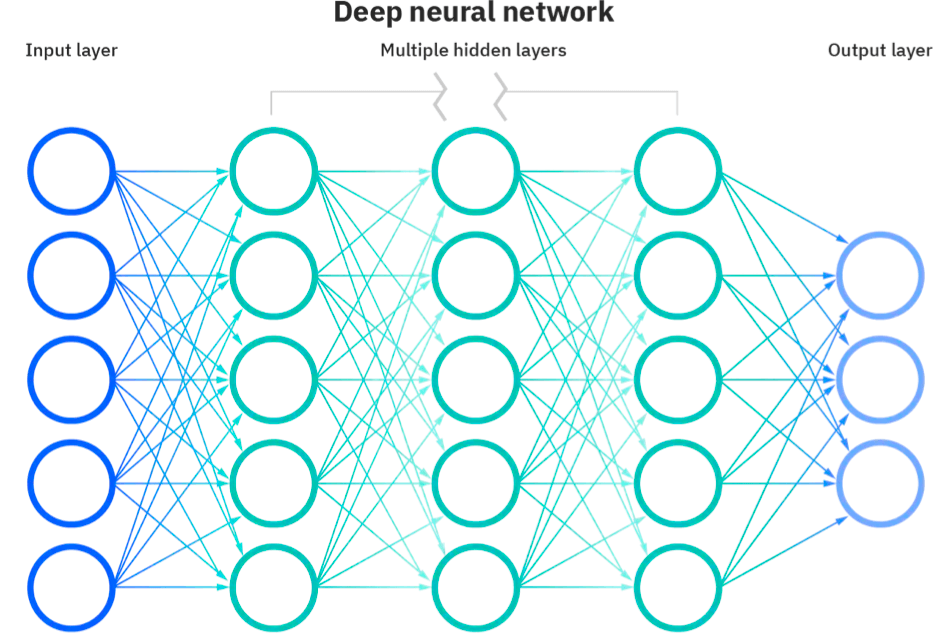

Just as its definition states, DL is inspired by the structure of the human brain. So, similarly to the way humans learn from experience and there are neurons in the human brain to transmit and process information, DL machines use artificial neural networks, also composed of neurons, to allow machines to learn from data. These multi-layered neural networks are where the information processing takes place in DL machines.

Figure 5: Layers of a Neural Network [10]

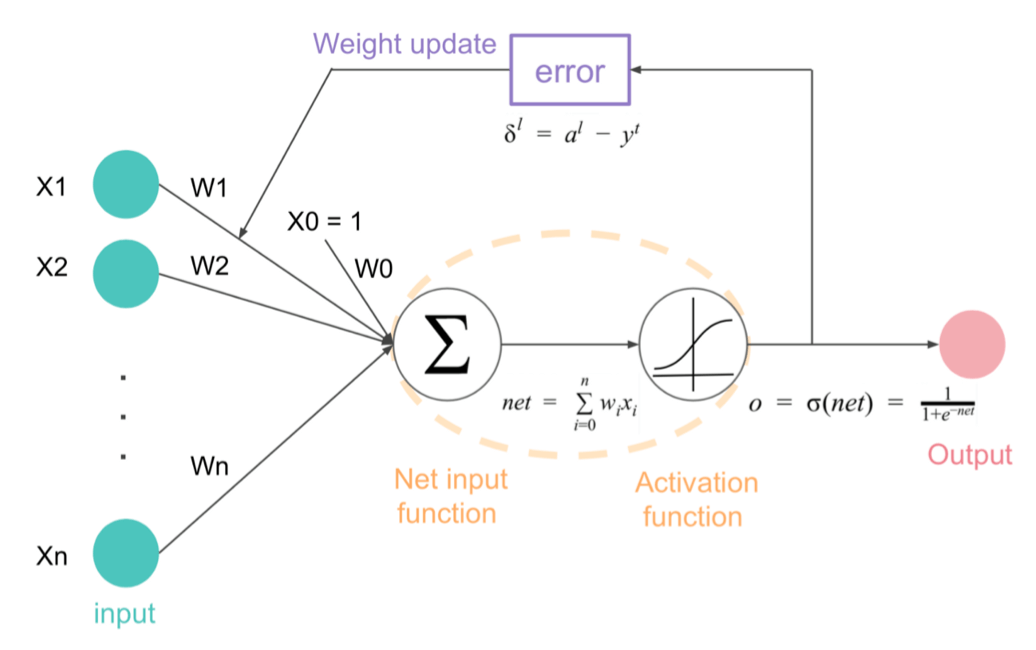

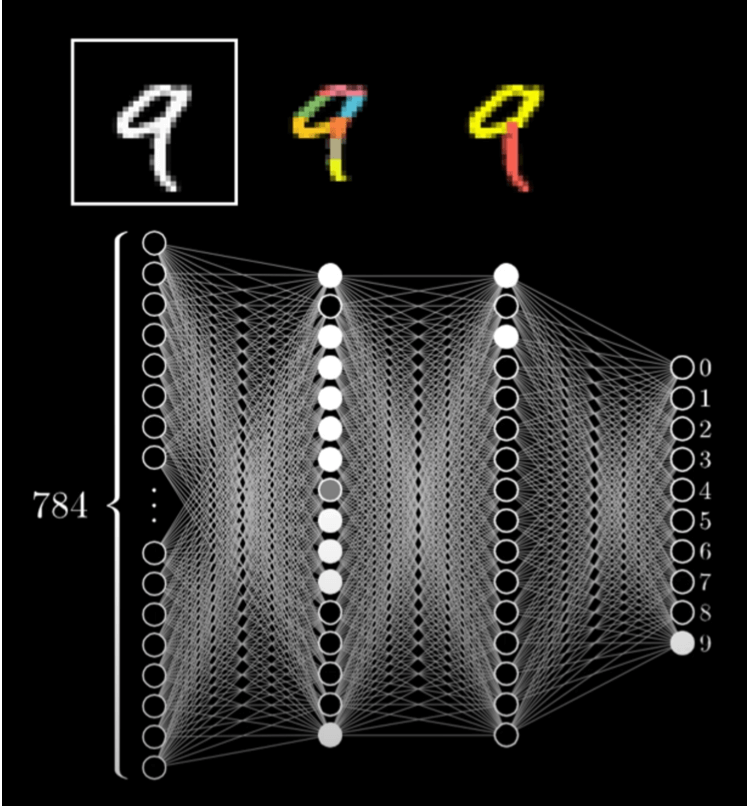

In every neural network, there is an input layer, an output layer, and then multiple hidden layers in between (see Figure 5). The way neural networks work is almost like a string of sieves (yes, the ones used for baking). Data goes through the layers, with each layer filtering out options, thereby leading to a single output at the end. The way that information flows through or is transmitted from one layer to the next is through connecting channels. Each channel has a weight, or a value, attached to it. Additionally, each neuron also has a unique number associated with it called a bias. The bias is added to the weighted sum of inputs reaching the neuron, which is then inputted into a function known as the activation function, the result of which determines if the neuron gets activated (see Figure 6).

Figure 6: Neural Network Calculations [20]

Every activated neuron then passes on information to the next layer of the neural network. This process continues until the second last layer of the network. Suppose the purpose of the neural network is to recognize a handwritten number nine. If the process is successful, there will be one activated neuron in the output layer, which would correspond to the digit “9”, and the network (in a simplified state and without the calculations) may look something like Figure 7.

Figure 7: Simplified Neural Network Showing Activated Neurons in Each Layer [2]

The way that DL machines learn or get better at a task is by using backpropagation, a method of fine-tuning weights based on the error rate of the previous iteration, thereby reducing error rates with each iteration. By continuously adjusting the weights and biases, a well-trained network is produced.

Application: DL machines have a wide range of uses. For example, DL can be applied, through AI chatbots, to be the first line of customer support, or it can even be used in the medical field. Regarding the latter example, DL technology and neural networks can be used to detect cancer cells or analyze MRI images to give detailed results.

Clearly, DL has a vast scope and is an extremely efficient way to deal with unstructured data; however, there are a few limitations that must be considered. Some of the biggest limitations of DL today are listed below.

- Large volume of data: The hard task when considering data collection (for DL machines) is to make sure there are enough examples for each parameter but not so many as to achieve overfitting when a model performs well on the training data, but it cannot generalize well on new, unseen data. Though the amount of training data needed largely varies with the difficulty of the problem, the number of examples for training DL machines can range from 10,000 to 1,000,000.

- Computational power: Training a neural network requires graphical processing units (GPUs), which have thousands of cores, compared to central processing units (CPUs). A core is like the brain of a CPU: it receives instructions and operates to satisfy such instructions. The issue with needing GPUs is that not all computers have the storage nor processing capabilities to accommodate massive amounts of training data (that are needed to train a good model). In addition, GPUs are more expensive than CPUs.

- Training time: The time required to train neural networks also acts as a limitation on DL, as a DL machine can take hours or even months to train. The time required to train a neural network increases with the amount of data and with the number of layers in the network.

3.2 Supervised Learning

Before a machine can understand any and all data it receives, it must be fed labeled data so that it learns to classify and process data using machine language. Supervised learning is the process in which a person observes an ML model, which precedes the unsupervised learning period. The overarching difference between supervised and unsupervised learning is that supervised learning teaches a model using labeled data sets; in other words, the type of data is known.

There are two types of supervised learning algorithms used for prediction in ML and when working with labeled data sets: classification, the organization of labeled data, and regression, the predictions of trends in labeled data to determine future outcomes. In classification, the goal is to find the decision boundary, which then divides the data set into different classes. Classification algorithms can be used to identify and classify things from cancer cells to spam emails. On the other hand, in regression, the goal is to find the best fit line, which can then more accurately predict an output. Regression algorithms can be used to predict things such as house prices or the weather.

3.3 Natural Language Processing (NLP)

Despite mispronunciations, accent changes, slang, slurs, and even homophones, humans can still understand each other. Well, now, with the help of NLP, machines can almost do the same!

Humans can remarkably understand context. For example, if a person is making an appointment, “date” is most likely referring to the calendar date, not the fruit; however, to regular computers, the word would simply be 1s and 0s, and the computer would not be able to differentiate between the two definitions as it would simply understand the word on its own, rather than understanding the word and the words around it.

But not anymore. NLP and AI have revolutionized computer software. With NLP, machines can use context clues to better understand humans; some examples of NLP machines include Google, which uses NLP to predict results as users type (see Figure 8), and Apple, which uses NLP to suggest relevant words to finish users’ sentences. Moreover, NLP can also help solve much more threatening problems such as crimes and diseases by identifying patterns, clues, and microscopic details in data.

Figure 8: How Google uses NLP [5]

Clearly, NLP can improve our lives manyfold, but how does such technology work? Well, the key lies in understanding context, and many different components give NLP the ability to do so.

While it may have been hard for old computers to decide whether the word “bank” was referring to a building that stores money or the land next to a river, an NLP-powered computer can easily differentiate the two using the thousands of sentences and different word patterns it has analyzed in the past to conclude that it is far more common for a person to mean they are depositing money at a bank, where “bank” refers to a financial institution.

Furthermore, whereas a regular machine might be unsure whether a person means leaves, like those in a park, or leaves, as in a person exiting a place, NLP-powered machines can quickly differentiate between the two using part-of-speech tagging. Take the following sentence: “The woman leaves the car park.” Part-of-speech tagging allows NLP machines to understand that “leaves” acts as a verb in that sentence, and therefore the user means leaves as in exiting, not as in those of a plant.

Data-based probability and part-of-speech tagging and chunking are just two of the many ways in which NLP machines can contextualize words in sentences or decide the precise meaning of a word using syntax and semantics.

Syntax is the set of rules that governs the structure of sentences, and it has subcategories such as tokenization, stemming, and lemmatization.

There are two types of tokenization: sentence tokenization and word tokenization. Tokenization is simply separating a paragraph into sentences or separating a sentence into words. Tokenization is a helpful tool as it allows machines to learn the potential meanings and unique purpose of each word.

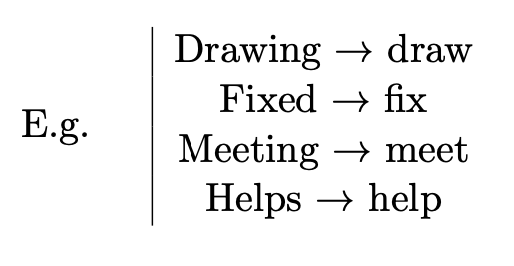

Stemming is the process of reducing a word to its root, or its stem, and is a way to chop universal prefixes and suffixes off of words.

However, sometimes stemming cuts off necessary parts of a word and thereby changes the meaning of the original word, a major fault of stemming, but one that can be fixed by lemmatization.

Lemmatization is the process of reducing words to their roots by morphological analysis. For example, if a machine detects the words “am,” “are,” and “is,” it can analyze the three words to conclude that the root form for all three is the verb “be.”

Semantics is the study of meaning in language and has subcategories such as named entity recognition and natural language generation.

Named Entity Recognition is similar to the aforementioned example of the word “leaves”; however, named entity recognition does not simply assign grammatical parts of a sentence to words. Instead, it allows machines to categorize specific words or phrases within a sentence as people, brands, time, organizations, and more.

Moreover, Natural Language Generation (NLG) is a process through which a machine uses mathematical formulas and numerical information to find patterns in data and then output understandable text.

In summary, NLP is what enables a machine to take user input, in the form of text, for example, and make sense of it. From there, the machine can formulate an appropriate statement of its own in response. Data-based Probability, Part-of-speech Tagging, Tokenization, Stemming, Lemmatization, Named Entity Recognition, and NLG are all components of NLP systems and, together, they allow computers to understand the context and meaning of a user’s words, or input.

3.4 Text-based Chatbots

NLP text-based chatbots use NLP to understand text input and respond accordingly. By using NLP, chatbots can essentially handle any question or statement that a user types in, even if the bot does not necessarily know the answer.

Amazingly, NLP and AI have evolved to be able to understand slang used in everyday conversations, analyze emotions from statements such as “I hate this product,” and quickly and efficiently perform a wide range of tasks!

3.5 Why do Chatbots use NLP?

The way that conversational AI bots work is the user inputs a question or statement into the machine, and in return, the machine produces a response. The conversational AI’s response can either be generated by a Retrieval-based model, one that finds existing appropriate responses from a pre-composed data repository, or a Generation-based model, one that organizes words or vectors to synthesize new responses. Further, for both single- and multi-turn conversations, a response can be produced solely based on a user’s input; however, because conversational AI bots usually engage in multi-turn conversations, the bot should take into account the context of the current conversation to produce a better response. Because understanding the context of input enables the technology to produce a better, or a more fitting response, many chatbots today use NLP.

4. Data Collection and Preparation Required for Chatbots

All data, or raw information such as facts, statistics, images, and essays, can be represented with 0s and 1s. In the real world, when a person observes physical things, those things become data in the person’s brain. In a Virtual Reality (VR) world, however, a person literally becomes surrounded by data, as data are the building blocks of everything in that simulated world. The process of collecting and preparing data to train a machine consists of three steps:

Step 1

Step 1 is to choose the right kind of data to train a machine with and to collect that data. The way to decide which kind of data to use is by looking at the problem, as the problem a person is trying to solve largely determines the type of data set one uses. After deciding what kind of data to use for training, there are two options: collecting data independently or using public data sets. To collect data individually, one can use a web scraping tool such as Beautiful Soup. On the other hand, if one opts to use public data sets, one can look on Kaggle, Reddit, or Github, where some users have provided public data sets.

Step 2

Step 2 is to format and process the data. To begin, it is important to format data to a file type that the user is comfortable and familiar with. Data usually comes in the form of a text file, a relational database, or a CSV (comma-separated values) file; however, there are file type converters that can easily convert a file to a different file type. The next task is to write a function to extract data, or to pull data from a data set into memory. The last task is to clean up the data by deleting instances in data where the value is empty or incomplete.

Step 3

The final step is to transform data into vectors, which are numerical representations of data –– words, images, videos, and more –– that DL systems can understand. After this conversion, the vectors can be fed into a neural network to initiate DL.

5. LSTM Model and Training

5.1 LSTM

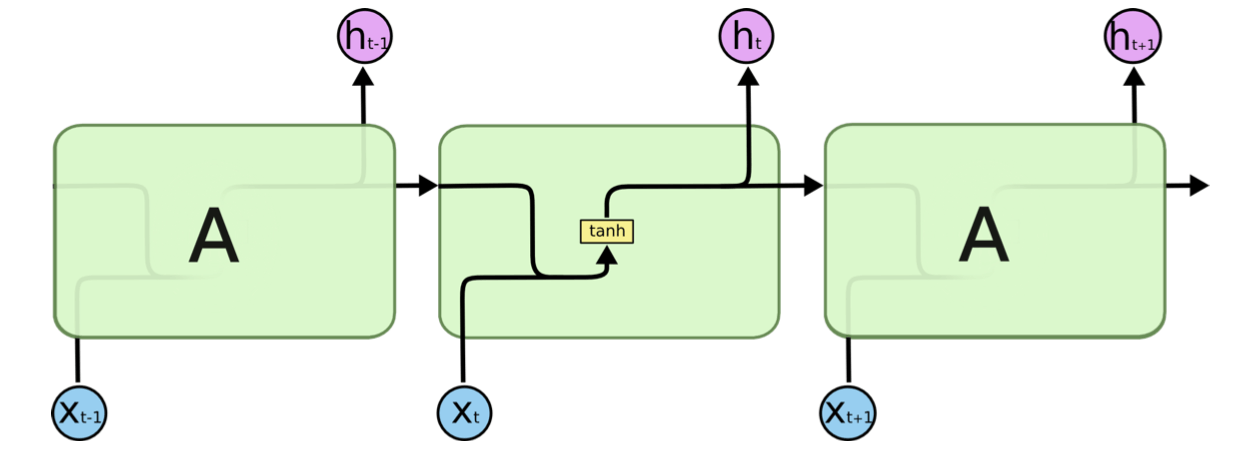

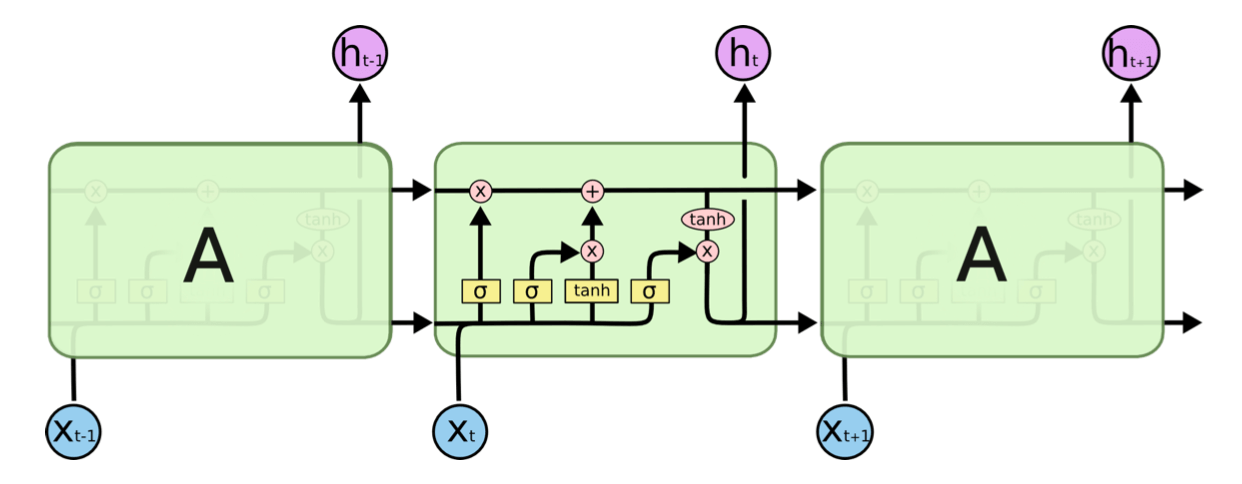

In order for the chatbot to be able to reply to responses from students in a human-like manner by drawing from its own memory, it needs an LSTM model. Long Short Term Memory (LSTM) is an artificial Recurrent Neural Network (RNN) that can retain necessary information and remove redundant information to maintain long-term memory. LSTM is used in ML for sequence prediction and other complex problems [18]. As seen in Figure 9, RNNs are networks that contain loops, allowing them to have short-term memory, which in turn allows them to store information that they can use in later operations. For example, if a user mentions that they play basketball and then later writes that they are going to take the weekend off to play…, the RNN can predict the next word to be “basketball”. However, simple RNNs have a major flaw: their short-term memory stops them from being able to use the information beyond a certain point in time. Because of this flaw, there are 4-layered LSTM modules, which are implemented more frequently and, as a result, are capable of learning long-term dependencies by using the same form of a chain of repeating modules of neural networks as the standard RNN. The only difference is the complexity of each repeating module. Each RNN has a simple structure, such as a single tanh layer; however, the LSTMs have four neural networks instead of just one (see Figure 10).

Figure 9: RNN Standard Repeating Module [18]

Figure 10: LSTM 4-layer Module [18]

The horizontal line at the top of the diagram is called the cell state (Ct), and it allows information to flow along it easily. The cell state only has two interactions that give the LSTM the ability to remove or add information, and that process is controlled by structures called gates. As their name suggests, the gates are a path that lets a certain amount of information through. They are made up of a Sigmoid Neural Network Layer and a Pointwise Multiplication operation. The purpose of the Sigmoid Layer is to output numbers between 0 and 1, with 0 being the most restrictive and letting no information through, and 1 being the least restrictive and letting all information through.

The LSTM model:

- Forget Gate Layer: This layer decides what information will be removed from the cell state by evaluating the hidden state of the Neural Network (ht1) and the input (xt), and then passing it through a Sigmoid Layer, which then outputs a number (ft) between 0 and 1.

- Input Gate Layer: This layer consists of two steps and determines what new information to store. First, a Sigmoid Layer determines what information will be updated, or replaced. Then, a tanh layer produces a vector of new, potential values that could be used instead, represented by (it ∗ Ct).

- The old cell state is now updated after the results of the previous steps have been put together. This is done by multiplying the old cell state by the information that is going to be forgotten (ft), thus forgetting that information. Then (itCt) is added, and these new values, which replace the old ones, are scaled by the extent to which one decides to update each value. So, the new cell state is represented by: Ct = ftCt−1 + itCt (where Ct−1 is the old cell state).

- Finally, the output is determined by creating a filtered version of the new cell state. This filter consists of a Sigmoid Layer and a tanh layer. The Sigmoid Layer determines which parts of the new cell state will be output from the cell state. That is then multiplied by what goes through the tanh layer, which just yields values between -1 and 1. The output of the Sigmoid Layer is represented by (ot), the output of the tanh layer is represented by (tanh(Ct)), and the final output is represented by (ht). So, ht = ot tanh(Ct).

Implementing LSTM: LTSM is used in chatbots to allow them to retrieve the most fitting response to a given question based on intent and semantic similarity (refer back to section 1.5.2 for more on Semantics). LSTM is a part of ML as it allows machines to retain the knowledge they need over time and teaches them how to respond to normal human speech, in turn making LSTM machines more adaptable. In terms of educational chatbots, being able to pick up on previous user input and later using it to formulate a more suitable and personalized response is important and is a feature enabled by LTSM.

5.2 Training

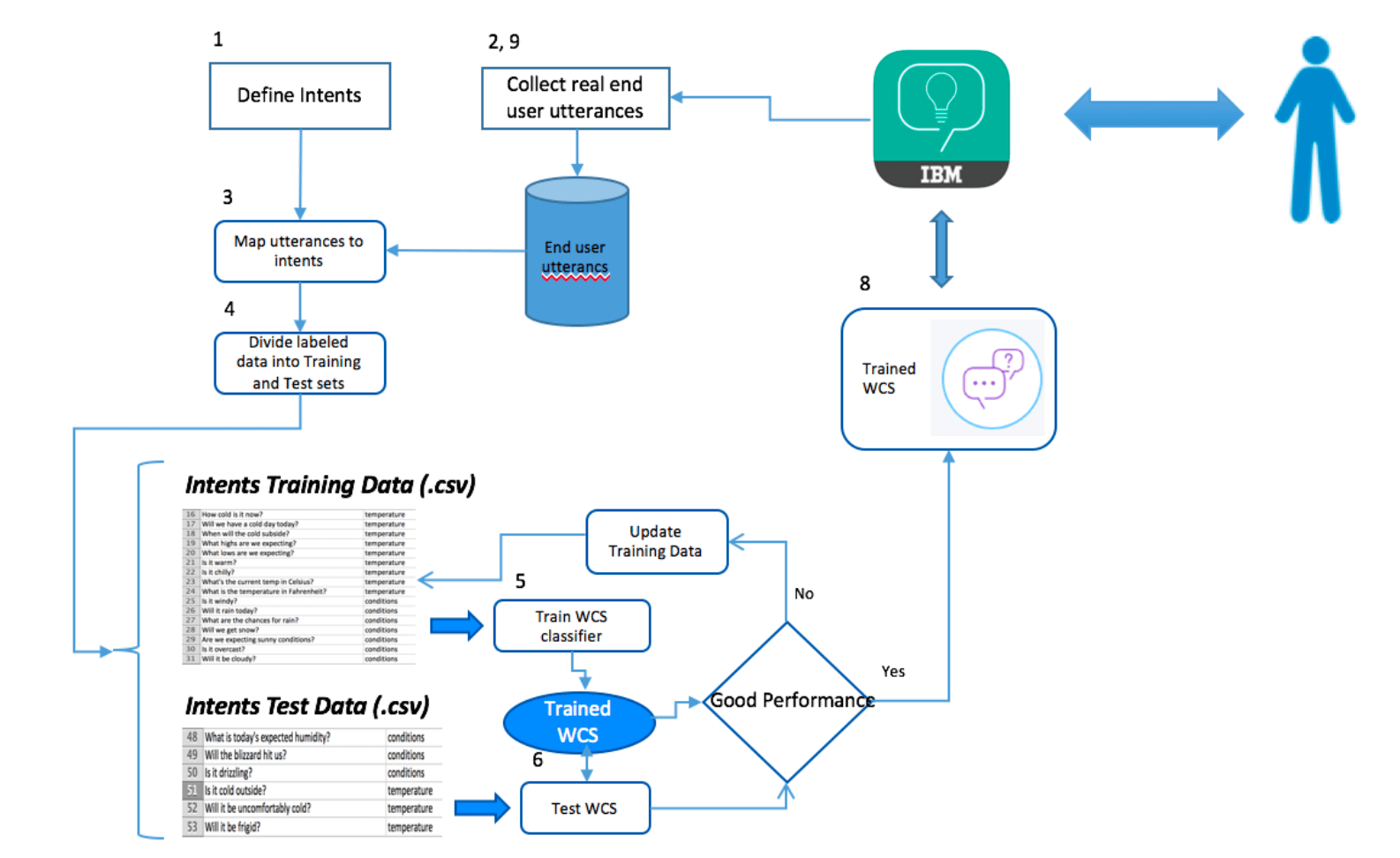

For a chatbot to understand the many ways in which users ask questions, it must be trained. The first step to training is setting a specific purpose for the chatbot, for example, asking students questions on their course material and then guiding those who arrived at the wrong answer to the right one. After determining the chatbot’s purpose, the following will need to take place:

I. Defining the intents

In this case, the common intents would be ShowCorrectAnswer, ExplainAnswer, etcetera, as these are the things that users would intend (the meaning behind their responses) when they reply to the chatbot after it alerts them that their answer was incorrect.

II. Collecting real utterances from users

After coming up with a good amount of utterances that predict what a user might say next, the process of collecting real data from real users begins. One of the techniques that can be used to collect this data is crowd-sourcing. Because this particular example is based on students, approaching a school and asking them to allow their students to participate might be a good idea.

III. Assigning utterances to intents

The collected utterances (from step 2) should be paired with the intents that were established at the beginning (in step 1), with the help of subject matter experts (educational experts-teachers) to make the process easier. The utterances with no clear intent can be linked to an “other” intent so that the chatbot can deal with off-topic responses.

IV. Dividing the data

Next, the paired utterances need to be prepared for testing. This preparation is done by dividing the utterances into two sets –– 70% training data and 30% test data.

V. Training the chatbot

By using the now-divided data set, an NLC classifier will train the data. “NLC utilizes an ensemble of classification models, along with Unsupervised and Supervised Learning techniques, to achieve its accuracy levels. After the training data is assembled, NLC evaluates the data against multiple Support Vector Machines (SVMs) and a Convolutional Neural Network (CNN) using IBM’s Deep Learning As a Service (DLaaS)” [25].

VI. Running the test data

After training, the test data set is run against the trained chatbot. During testing, performance metrics such as accuracy, precision, and recall should be collected.

VII. Analyzing error

After running all of the test data, the next step is to review the results and understand why the chatbot failed to respond to certain inputs. After doing so, one should update the training data and begin the second round of training.

VIII. Keeping developing

When the chatbot is in use, one should constantly be collecting user utterances and intents, as well as correcting the chatbot’s mistakes so that it can continue to become more advanced and comfortable with dealing with all sorts of responses.

Figure 11: The Process of Training a Model [25]

6. Evaluation and Deployment of a Chatbot

6.1 Evaluation

After a chatbot completes training, it is analyzed to reveal how it has impacted users. This analysis uses a few key metrics, such as the following:

- Self-service rate: the % of people accessing the website and not using the chatbot.

- Performance rate: the number of correct answers inputted by users divided by the number of active user sessions (sessions in which the chatbot was used).

- Usage rate per login: the number of active user sessions divided by the number of sessions on the website.

- Bounce rate: the number of sessions in which the chatbot window was opened, but the student did not use it.

- Satisfaction rate: the average satisfaction of the students and how they found working with the chatbot

- Average chat time: the average time spent interacting with the chatbot (reflects users’ interest in using the chatbot)

- Average number of interactions: the average number of times the chatbot interacts with a user. This data reflects the effort that users are putting into using the chatbot tool and whether or not the chatbot is meeting users’ needs.

- Non-response rate: the number of times that the chatbot has been unable to respond to user input due to lack of understanding.

Though the metrics above [21] are useful when it comes to regular monitoring and improving the effectiveness of a chatbot, a model’s evaluation should not be based on them alone. Depending on the market and the product itself, the metrics and indicators will vary and be continually adjusted until those most relevant are found and implemented. These metrics should be tracked and analyzed over a longer period of time so that the results can reflect how satisfaction rates evolve, for example. After compiling such data, add-ons, updates, and fixes can be developed, thereby bettering the chatbot.

6.2 Deployment

The final step is to officially deploy a chatbot, for example, by uploading it to a website. One can create a website by either using a website-generating tool, such as wix.com, or by manually coding one using HTML and CSS. Thecleverprogrammer.com is a reliable website that contains the code required for producing the base of a website.

After doing so, one should consider the following additions to improve the deployment process:

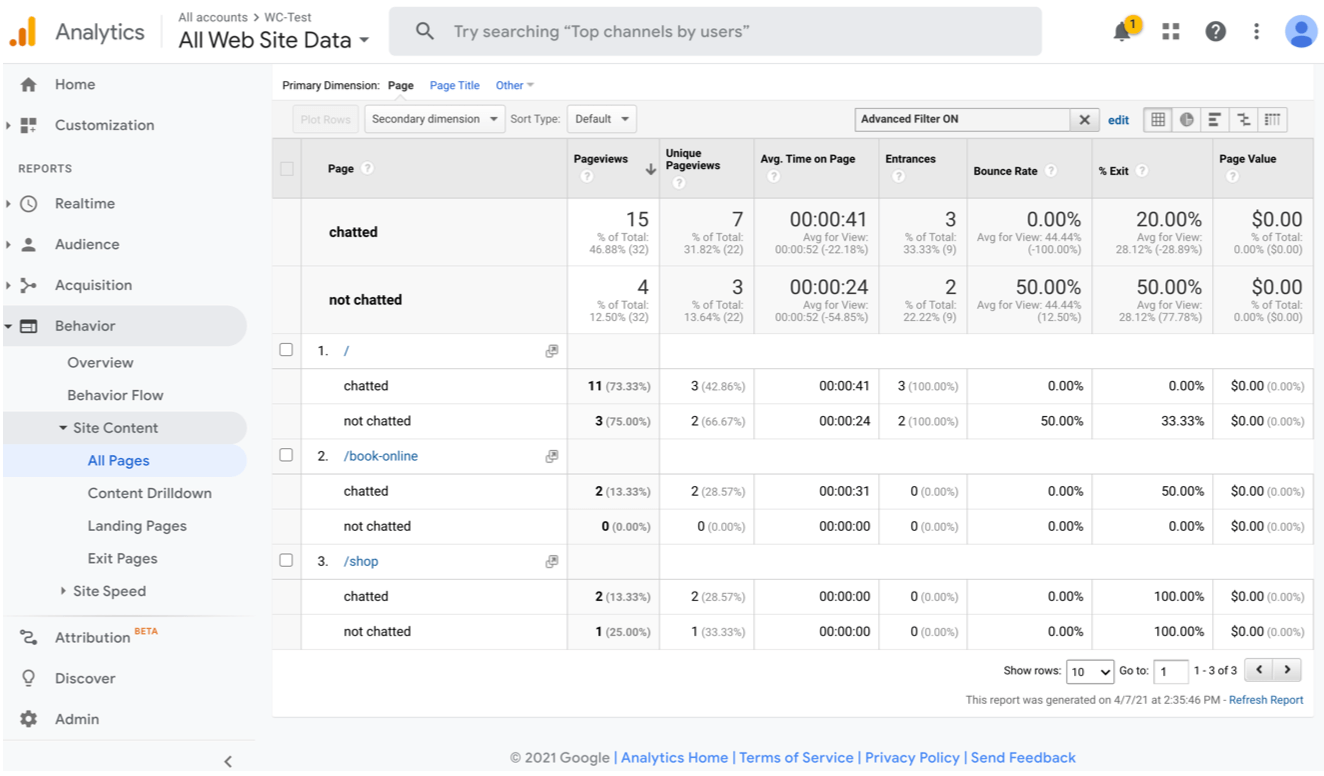

- Activity Tracking (using Google Analytics) to track users’ activity on the site. A good use for this feature would be to create a metrics table (see Figure 12) to evaluate a chatbot’s influence. [23]

- Securing a URL is a good idea as it allows people to promote the website more easily. After doing so, it is a good idea to use social media sites, such as Facebook, to attract users. Considering the type of website and chatbot is also incredibly important as it determines which audiences to target and then which mediums to use to do so.

Figure 12: Metrics Table generated by the Launch Website [23]

7. Our Proposal: SCIENTIFIC

Our Proposal is “SCIENTIFIC,” a learning platform that targets middle and high school students (Grades 6-12) and seeks to deliver Science material in a fun and fully interactive way. The curriculum consists of Biology, Chemistry, and Physics. SCIENTIFIC has both a website and a companion app; students have their own accounts, and teachers create classes on the website for their students to join with a class code.

SCIENTIFIC is a learning platform that has a video-based curriculum. Students progress through the video curriculum in a set order, with the length of each video ranging from 3-7 minutes. Additionally, every video pauses periodically to ask the student a question. There are a total of 3-5 questions per video, and all questions are pre-written by the student’s teacher, which allows SCIENTIFIC to cater to a teacher’s unique teaching objectives. When the student answers the question, instead of marking it as correct/incorrect, an AI-powered chatbot processes the student’s answer, and if incorrect, the chatbot guides them through a series of interactions until they arrive at the right answer, thereby helping the student achieve a solid understanding of the topic. Then the video continues. At the end of each video, a personalized cheat sheet/study guide will be formulated for the student containing the teacher’s questions from that video and the student’s final correct response. Additionally, SCIENTIFIC uses data analytics to create a diagnostic report of a teacher’s whole class, detailing the questions that students collectively took the longest to reach the right answer with the prompting of the AI chatbot. This feature helps teachers understand which topics or parts of topics their students find hardest, and thereby allows them to adjust their teaching accordingly.

After each chapter of the video curriculum is completed, the student participates in a Virtual Reality (VR) lab, using VR goggles and a remote controller. The lab tests students on their knowledge of the past chapter of videos and is fully interactive. There is also a collaboration aspect to the labs that allows multiple students to be in the virtual lab at the same time.

To cater to a wider range of students with different accessibility to companion products (VR + controller), there is also a version of the VR labs online, as a computer version of the VR, so as to make SCIENTIFIC more accessible!

Figure 13: SCIENTIFIC Logo

7.1 Distinctions of Our Chatbot

- Uses LSTM to collect information in the initial part of conversations to personalize the experience

- Uses NLP and Interaction Analytics to make chatbots come across as friendlier and more conversational

7.2 Course Material and Training

The videos are produced by the SCIENTIFIC team; however, the questions that students are asked during videos are written by their teacher(s). Our chatbot will be trained with data from numerous Biology, Chemistry, and Physics textbooks, relevant online public data sets, relevant educational slideshows, and text-based human-to-human interactions. Our chatbot will also undergo a Supervised Learning period. In order to allow it to produce fitting responses, not stiff pre-programmed ones, it will use NLP and DL. Teachers will also be able to upload extra content (images, diagrams, text, etc.) to the website.

7.3 Handling Inappropriate Input

If a user is inputting inappropriate or vulgar text into a chatbot conversation, the chatbot will ignore it and instead output information that is relevant to the content and that remains in context; however, if a user is persistent and continues to input inappropriate text, after ten times, they will see a time out message, informing the user that they are inputting inappropriate and/or vulgar text that does not have anything to do with the content and, like a password timeout, the chatbot will become unavailable to them for a short period of time.

Bibliography

[1] Dec. 2016. url: https://youtu.be/0xVqLJe9_CY.

[2] Mar. 2017. url: https://youtu.be/cfj6yaYE86U.

[3] June 2019. url: https://youtu.be/6M5VXKLf4D4.

[4] Aug. 2020. url: https://youtu.be/7t1z-ZsVUs0.

[5] url: https://www.google.co.uk/.

[6] AI Chatbot Software for Your Website. url: https://www.chatbot.com/.

[7] Rick Birkenstock. Chatbot Technology: Past, Present, and Future. 2018. url: https : / / www . toptal . com / insights / innovation / chatbot – technology – past – present – future.

[8] Jason Brownlee. How Much Training Data is Required for Machine Learning? May 2019. url: https : / / machinelearningmastery . com / much – training – data – required – machine-learning/.

[9] Bert Carremans. Handling overfitting in deep learning models. Jan. 2019. url: https: / / towardsdatascience . com / handling – overfitting – in – deep – learning – models – c760ee047c6e.

[10] IBM Cloud Education. AI vs. Machine Learning vs. Deep Learning vs. Neural Networks: What’s the Difference? May 2020. url: https://www.ibm.com/cloud/blog/ai- vs machine-learning-vs-deep-learning-vs-neural-networks.

[11] Jake Frankenfield. How Artificial Intelligence Works. July 2021. url: https : / / www . investopedia.com/terms/a/artificial-intelligence-ai.asp.

[12] Research Gate. 1: Relation Between A.I., ML and DL [13]. May 2018. url: https://www. researchgate.net/figure/Relation-Between-AI-ML-and-DL-13_fig2_325023664.

[13] Brett Grossfeld. Deep Learning vs. Machine Learning: What’s the difference? url: https: //www.zendesk.co.uk/blog/machine- learning- and- deep- learning/#:~:text= The%20difference%20between%20deep%20learning%20and%20machine%20learning,- In%20practical%20terms&text=While%20basic%20machine%20learning%20models, they%20still%20need%20some%20guidance.&text=A%20deep%20learning%20model% 20is,it%20has%20its%20own%20brain.

[14] Guru99. Back Propagation Neural Network: What is Backpropagation Algorithm in Ma chine Learning? url: https://www.guru99.com/backpropogation-neural-network. html.

[15] Computer Hope. Aug. 2020. url: https://www.computerhope.com/jargon/c/core. htm#:~:text=A%20core,%20or%20CPU%20core,eight%20cores,%20octa-core.

[16] JavaTPoint. Regression vs Classification in Machine Learning – Javatpoint. url: https: //www.javatpoint.com/regression-vs-classification-in-machine-learning.

[17] Archna Oberoi. The History and Evolution of Chatbots. 2020. url: https://insights. daffodilsw.com/blog/the-history-and-evolution-of-chatbots.

[18] Christopher Olah. “Understanding lstm networks”. In: (2015).

[19] Zeineb Safi et al. “Technical Aspects of Developing Chatbots for Medical Applications: Scoping Review”. In: Journal of medical Internet research 22.12 (2020), e19127.

[20] Rishabh Sharma. Deep Learning Activation Functions their mathematical implemen tation. May 2021. url: https : / / medium . com / nerd – for – tech / deep – learning – activation-functions-their-mathematical-implementation-b620d536d39b.

[21] unknown. “10 Key Metrics to Evaluate your AI Chatbot Performance”. In: (2021).

[22] unknown. “8 Ways to Improve Chatbots and Boost Customer Satisfaction”. In: (2021).

[23] unknown. “Deploy AI Chatbot”. In: (2021).

[24] unknown. “Technical Aspects of Developing Chatbots for Medical Applications: Scoping Review”. In: (2021).

[25] unknown. “Train and evaluate custom machine learning models”. In: (2021).

[26] Nishant Upadhyay. Chatbot Development: Why do Chatbots exist. Dec. 2020. url: https: //www.botreetechnologies.com/blog/chatbots-what-why-how/.